Claude Code vs. Gemini CLI vs. Codex CLI: What’s the Best Choice for Internal Tool Development? (2025 Summer Edition)

In 2025, building in-house tools with coding agents is spreading rapidly. Unlike off-the-shelf SaaS, agent-built tools can be tailored to your company’s workflows, and they can be spun up in a short time.

One category drawing particular attention is CLI-based AI tools that let you generate, edit, and run code from the terminal using natural language. The three main options are Claude Code, Gemini CLI, and Codex CLI. Which should you choose?

This article offers a practical comparison to help you pick the best option for your environment.

Why CLI-Based AI Tools?

Managing Context

To steer an AI’s behavior and get accurate output, you need to manage the information (context) you give it.

CLI tools operate on the premise that “the repository is the world”: they reason from the code and documents under your project directory. Claude Code automatically reads CLAUDE.md at the project root (and, where present, additional CLAUDE.md files elsewhere in the directory hierarchy) and treats it as standing instructions for what to build in this project and how. Because these instructions are loaded into every session rather than typed into the conversation, they work much like a “system instruction” and improve output consistency.

Gemini CLI lets you provide similar project context via GEMINI.md; its README explains the mechanism under “custom context files.” Codex CLI recommends supplying context through AGENTS.md or codex.md, so that local instructions are reflected directly in its behavior.
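To make the idea of hierarchical context files concrete, here is a minimal sketch of how files could be gathered from a working directory up to the project root. It is not how any of the three tools is implemented. The function names, the search order, and the "outermost first" merge rule are all assumptions for illustration, and each tool's actual discovery rules are described in its own documentation.

```python
from pathlib import Path

# File names mentioned in this article; the lookup logic itself is hypothetical.
CONTEXT_FILENAMES = ("CLAUDE.md", "GEMINI.md", "AGENTS.md")


def collect_context_files(start_dir, project_root, filenames=CONTEXT_FILENAMES):
    """Return context files between project_root and start_dir, outermost first."""
    start = Path(start_dir).resolve()
    root = Path(project_root).resolve()
    if start != root and root not in start.parents:
        raise ValueError("start_dir must be inside project_root")

    # Walk upward from the working directory until we reach the project root.
    chain = []
    current = start
    while True:
        chain.append(current)
        if current == root:
            break
        current = current.parent

    # Reverse so that general (root) rules come before specific (nested) ones.
    found = []
    for directory in reversed(chain):
        for name in filenames:
            candidate = directory / name
            if candidate.is_file():
                found.append(candidate)
    return found


def build_context(paths):
    """Concatenate the files into one block of text, labeled by source path."""
    sections = []
    for path in paths:
        body = path.read_text(encoding="utf-8").strip()
        sections.append(f"# From {path}\n{body}")
    return "\n\n".join(sections)
```

Putting root-level rules first and nested rules last is one possible design: it lets a subdirectory refine the general rules without repeating them.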

Long-Term Memory via Saved Files

Chat-style AI tools primarily rely on thread history for long-term memory, but CLI-based tools can load local files whenever needed. This makes it easier to leverage past work for future tasks. If you organize the information you want the AI to remember and save it as files in the repository, it stays available across sessions.

Compared with thread-based tools, users can more easily participate in curating the AI’s long-term memory.
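One simple way to curate this kind of memory is to keep a dated notes file in the repository and point the agent at it. The sketch below is only an illustration. The helper name `remember`, the file layout, and the "# Project notes" heading are hypothetical and not a feature of any of the tools discussed here.

```python
from datetime import date
from pathlib import Path


def remember(note, memory_file, today=None):
    """Append a note under a per-day heading in a Markdown memory file.

    Assumes notes are added in chronological order, so today's heading
    (if present) is always the last heading in the file.
    """
    today = today or date.today()
    heading = f"## {today.isoformat()}"
    path = Path(memory_file)

    if path.exists():
        text = path.read_text(encoding="utf-8")
    else:
        text = "# Project notes\n"

    # Start a new dated section the first time we write on a given day.
    if heading not in text:
        text = text.rstrip("\n") + f"\n\n{heading}\n"

    text = text.rstrip("\n") + f"\n- {note}\n"
    path.write_text(text, encoding="utf-8")
    return path
```

Because the result is an ordinary Markdown file, teammates can review and edit it in pull requests like any other document.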

Leveraging Other CLI Tools and MCP Servers

Chat-style AI tools run on the provider’s servers, which can make integrations with external tools difficult; what you can connect to often depends on provider-supplied connectors.

CLI-based AI tools, on the other hand, run locally and therefore integrate more easily with existing CLI commands and MCP servers. For example, you can tap MCP servers like Playwright for browser automation or MarkItDown, which converts PDFs and PPTX files into AI-friendly Markdown. This makes it easier to extend your AI’s capabilities.
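As a rough illustration, many MCP clients accept a JSON mapping of server names to launch commands under an "mcpServers" key. The sketch below builds such a mapping for the two servers mentioned above. The package names and launch commands (`@playwright/mcp@latest`, `markitdown-mcp`) are assumptions, as is the exact file each tool reads this from, so check each server's README and each CLI's documentation before relying on them.

```python
import json


def build_mcp_config(servers):
    """Build a {"mcpServers": {...}} mapping from name -> (command, args)."""
    config = {"mcpServers": {}}
    for name, (command, args) in servers.items():
        config["mcpServers"][name] = {"command": command, "args": list(args)}
    return config


# Launch commands below are assumptions; verify them in each server's README.
SERVERS = {
    "playwright": ("npx", ["@playwright/mcp@latest"]),
    "markitdown": ("markitdown-mcp", []),
}

config_text = json.dumps(build_mcp_config(SERVERS), indent=2)
print(config_text)
```

The printed JSON can then be saved wherever your CLI expects its MCP configuration.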

Claude Code: The Most Mature, Production-Grade Option

Claude Code is Anthropic’s CLI-based AI tool. As a pioneer in this space, it stands out for its high customizability and a mature community.

Model Strengths

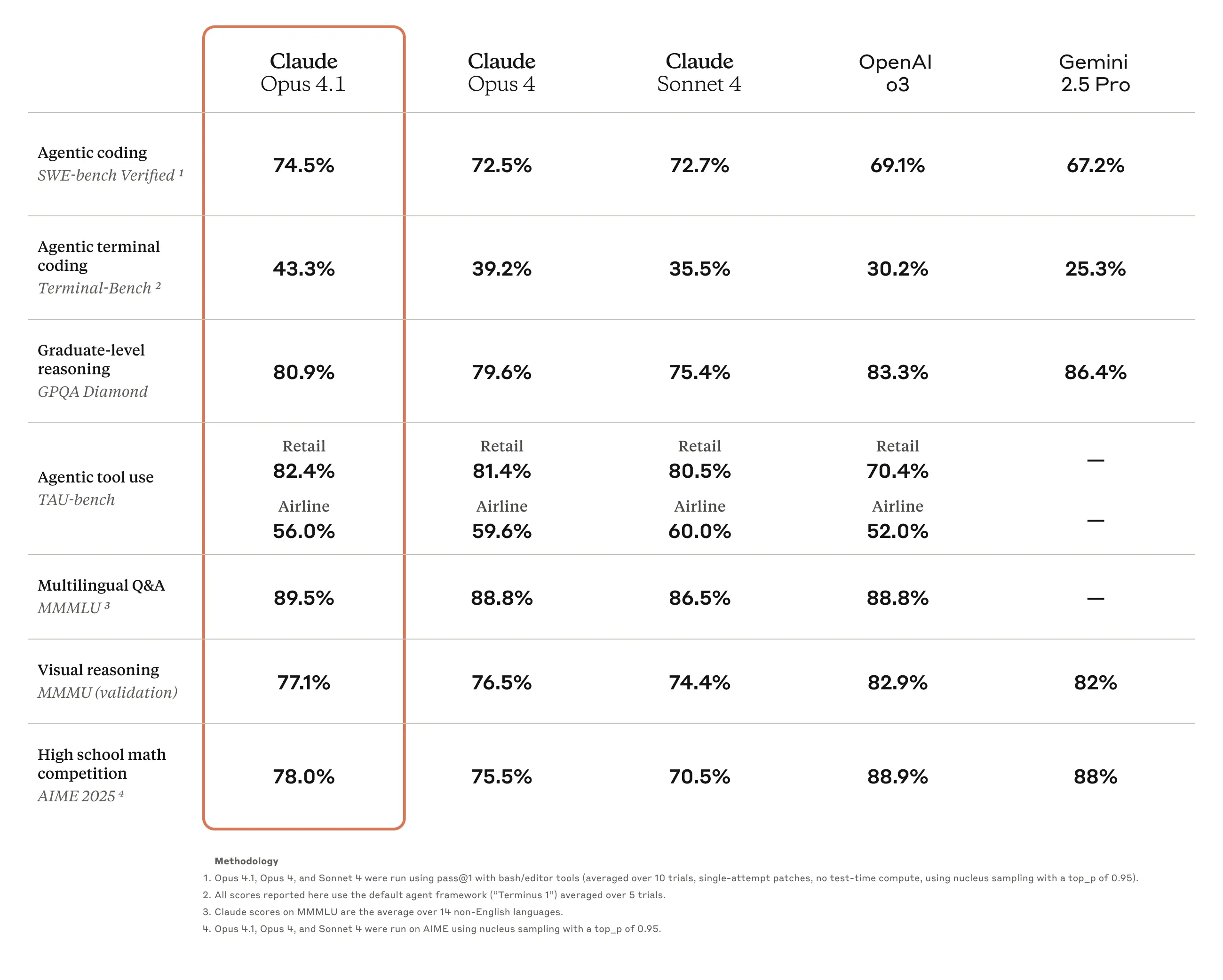

Claude Code primarily uses “Claude Opus 4.1” and “Claude Sonnet 4.” Both models score highly on coding benchmarks, and in practice you’ll likely find their output accurate.

Custom Slash Commands

In Claude Code, you can create custom commands by writing Markdown files under the .claude/commands directory. These commands can be invoked during a chat with Claude Code to automate repetitive tasks. You can also push the commands to GitHub and share them with your team.
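A minimal sketch of scaffolding such a command file follows. The command name `review-risk` and the prompt text are hypothetical. The use of `$ARGUMENTS` as the placeholder for text typed after the command, and the command name being taken from the file name, reflect my understanding of the Claude Code docs and should be treated as assumptions to verify.

```python
from pathlib import Path

# Hypothetical prompt; $ARGUMENTS is assumed to be replaced with the user's input.
COMMAND_TEMPLATE = """\
Review the changes described below and list risks for our internal tools.

Focus on: $ARGUMENTS

1. Summarize what changed.
2. Flag missing tests or input validation.
3. Suggest the smallest safe fix for each issue.
"""


def scaffold_command(project_root, name, body=COMMAND_TEMPLATE):
    """Write <project_root>/.claude/commands/<name>.md and return its path."""
    commands_dir = Path(project_root) / ".claude" / "commands"
    commands_dir.mkdir(parents=True, exist_ok=True)
    path = commands_dir / f"{name}.md"
    path.write_text(body, encoding="utf-8")
    return path
```

After running `scaffold_command(".", "review-risk")` and committing the file, teammates who pull the repository would get the same command.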

You can discover useful community commands in public repositories such as Awesome Claude Code.

Subagents

Claude Code lets you create subagents by writing Markdown files under .claude/agents/. You can call these subagents during a chat to split and handle complex tasks. As with commands, you can push them to GitHub for team sharing.

Unlike custom slash commands, subagents maintain context independent of the main conversation. They’re handy when you don’t want to pollute the main thread’s context or when you want to handle a large, separate context. For example, if you have a repetitive, purpose-specific task whose details don’t need to be fed back into the main conversation, a subagent is ideal.
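The context-isolation idea can be sketched as a toy model: the subagent works in its own message list, and only a short summary is copied back into the main conversation. This is a conceptual illustration only. The `Conversation` class and `run_subagent` function are hypothetical and do not represent Claude Code's internals.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """A toy context: an ordered list of (role, text) messages."""
    messages: list = field(default_factory=list)

    def add(self, role, text):
        self.messages.append((role, text))


def run_subagent(task, steps):
    """Run `steps` in a fresh context and return (summary, subagent context)."""
    sub = Conversation()  # separate from the caller's context
    sub.add("user", task)
    for step in steps:
        sub.add("assistant", step)
    summary = f"{task}: completed {len(steps)} steps"
    return summary, sub


main = Conversation()
main.add("user", "Audit the logs, then update the dashboard.")

summary, sub = run_subagent(
    "Audit logs",
    ["read app.log", "read worker.log", "found 2 errors"],
)

# Only the summary enters the main thread; the detailed steps stay in `sub`.
main.add("assistant", summary)
```

Here the main conversation grows by one message while the subagent's context holds the detailed steps, which is the separation the paragraph above describes.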

MCP Server Integrations

Claude Code can integrate with external tools via MCP servers. You can find useful MCP servers in public repos such as Awesome MCP Servers.

Claude Code also provides commands for managing MCP servers, and its documentation explains how to scope where each server is available. See the official docs for details.

Gemini CLI: Speed, Google Search, and a Generous Free Tier

Gemini CLI is Google’s CLI-based AI tool. Its strengths include integration with Google services and the cost-effectiveness of Gemini models.

Model Strengths

The main models used with Gemini CLI are “Gemini 2.5 Pro” and “Gemini 2.5 Flash.” The Gemini 2.5 series is known for fast responses, and the generous free tier is another advantage.

You can also use grounding integrated with Google’s search services, making it an excellent choice if you often need to perform web searches and fact-checking.

MCP Server Integrations

Gemini CLI also supports integrations with MCP servers.

Codex CLI: OpenAI-Made, With Room to Grow

Codex CLI is OpenAI’s CLI-based AI tool. Its strength is access to OpenAI models.

Model Strengths

The main model used with Codex CLI is “GPT-5.” A key characteristic is its automatic “routing”: based on the user’s instructions, it decides internally how much reasoning to apply, working through complex tasks thoroughly while answering simple ones quickly. That said, you may not always see a clear accuracy advantage over Claude Code or Gemini CLI at this point.

As an industry leader, OpenAI ships model updates rapidly, and Codex CLI can take advantage of them quickly, which is another reason there’s a lot to look forward to.

MCP Server Integrations

Codex CLI also supports MCP server integrations.

The Importance of Starter Templates

The single most important factor when introducing an AI CLI tool is proper initial setup. These tools read your codebase before generating code, so they tend to follow the stack and coding rules already in place. Starting from a template such as Squadbase Starters lets you steer your coding agent’s behavior from the first commit and raise your success rate.

Deploying the Apps You Build

Combining starter templates with an AI CLI tool should enable you to build the tools you want. To share your finished work with the team, however, you’ll need a secure and scalable deployment environment. Squadbase is a platform for deploying internal apps. Because it deploys via Git integration, your team can start operating the tool right away.

Final Thoughts

In the 2025 AI CLI market, Claude Code is a strong choice for enterprise internal tool development. Its security features, rich customization (custom slash commands, subagents, and MCP integrations), and production-quality code generation support organization-wide adoption.

Gemini CLI works well as an alternative when budgets are tight or when tight integration with Google’s search services is important. Codex CLI is promising as GPT-5 continues to evolve.

Crucially, success depends not only on the tool you choose but also on building an environment your whole team can use. Leverage a platform like Squadbase to turn tools created with AI CLIs into organization-wide productivity gains.